Apart from some additional memory consumption, which might be a small problem if the optimiser is wrong and statistics are off, I don’t see any drawback of this new feature. The feature is turned on in PostgreSQL 14. It seems that with this new feature, correlated subqueries could be rewritten to nested loop outer joins in the future? Other optimisers already do this, and we would have effectively the same feature here as Oracle’s scalar subquery caching. | -> Index Only Scan using uj on u (cost=0.29.4.38 rows=5 width=0)|Īnd the benchmark (you can paste the query into the benchmark logic yourself to reproduce) confirms there’s no memoization effect Run 1, Statement 1: 00:00:03.617562 Regrettably, the plan doesn’t show memoization: |QUERY PLAN | That’s great news! Wait, does this work also for ordinary correlated subqueries? Because the above LATERAL correlated subquery could be rewritten as: We could run a query like this:Īnd this time, we can see a significant speedup! Run 1, Statement 1: 00:00:03.419728 Across the whole system, that alone would already be worth it. On my machine, the results are consistent, decent, not too impressive, but still significant: Run 1, Statement 1: 00:00:03.763426

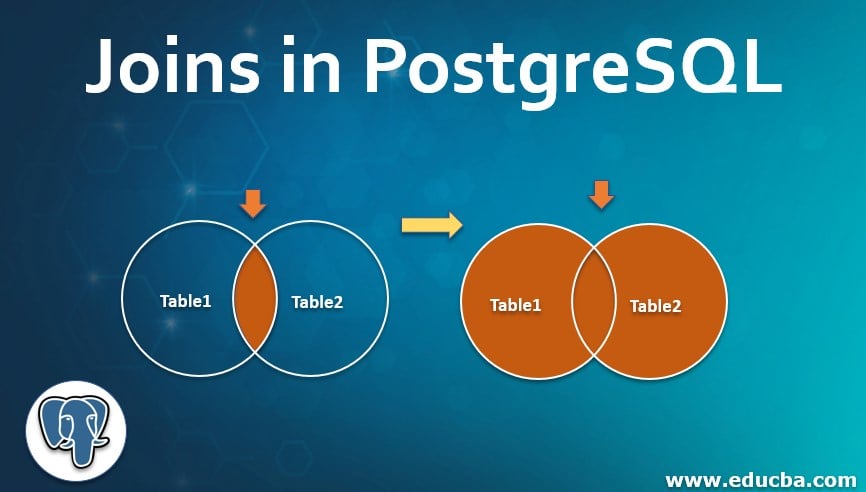

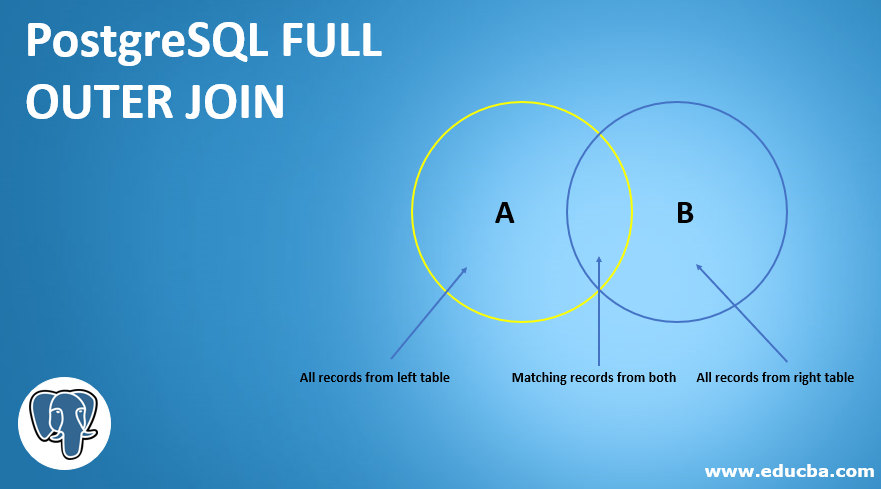

RAISE INFO 'Run %, Statement 2: %', r, (clock_timestamp() - v_ts) RAISE INFO 'Run %, Statement 1: %', r, (clock_timestamp() - v_ts) Repeat the whole benchmark several times to avoid warmup penalty You can run the following benchmark on the above schema yourself, to verify: We repeat the above 5x to mitigate any warmup and other caching effects.We repeat an operation 25x in mode A and mode B and compare (or more than 25, if it’s a fast operation).We’re using the usual benchmark technique described here: | -> Index Scan using uj on u (cost=.29 rows=100000 width=8)| When turned off, PostgreSQL seems to choose a hash join or merge join instead, on my machine (between multiple executions, the plan might switch) |QUERY PLAN | In the case of PostgreSQL’s enable_memoize, this can be particularly useful for nested loop joins in SQL, and to reference the above tweet, a lateral join is often executed via a nested loop join. If the calculation of such a function is costly, and there are only few possible input values, then why not just maintain a hash map that maps all previous input values and use that to look up known (or at least frequent) values instead of computing them again?Īs I’ve shown previously on this blog, Oracle 11g has introduced a feature called scalar subquery caching, a feature which you can activate in jOOQ to avoid costly PL/SQL context switches.

For example, no matter how many times you calculate UPPER('x'), you’ll always get 'X'. In a perfect world free of side effects (and SQL is such a perfect world, in theory), memoization means that we can substitute y for f(x) in any computation, given that y = f(x).

But will it also help with an “ordinary” join? I wanted to try myself. Improving query speed by 1000x hints at something very suboptimal having been going on before, and a tool like memoization can be of great help. memoize in PostgreSQL 14 makes one of my queries using join lateral 1000x faster □- Rasmus Porsager November 2, 2021

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed